Operating an AWS organization often means writing scripts to inventory resources (EC2 instances, RDS instances, S3 buckets…), remediate tags, or delete unused resources.

When it comes to running these operation scripts across an AWS organization, parallelization is the key to speeding up the process. Processing accounts and regions sequentially in a single script can take hours. With Step Functions and Lambda, we can process all accounts and operated regions in minutes from an operator account. As a bonus, it's serverless and incurs almost no additional cost.

Why sequential scripts fail at scale

Before diving into the architecture, it's worth understanding why the naive approach breaks down quickly.

Suppose you manage 20 AWS accounts across 5 regions, a modest size for a growing organization. That gives you 100 account/region combinations. If processing each combination takes 30 seconds on average (network calls to describe resources, API pagination, STS assume role overhead), a sequential script running in a single process takes 50 minutes to complete. At 50 accounts × 5 regions, you're looking at over 2 hours. At that point the data is stale before the run even finishes.

With Step Functions and a Map state, those 100 combinations run in parallel. When every item fits within the concurrency limit, the wall-clock time drops to roughly the duration of your slowest single invocation, typically 60–90 seconds. Beyond that limit (discussed later), items run in waves, so the total is roughly the number of waves times the per-item duration.
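The arithmetic above can be sketched in a few lines of Python. This is only a back-of-the-envelope model: the function names are made up for illustration, and it assumes every item takes the same time and that items run in full waves.

```python
import math


def sequential_seconds(items: int, seconds_per_item: float) -> float:
    """Total time when account/region combinations are processed one by one."""
    return items * seconds_per_item


def parallel_seconds(items: int, seconds_per_item: float, max_concurrency: int) -> float:
    """Rough total time when items run in waves of at most max_concurrency."""
    waves = math.ceil(items / max_concurrency)
    return waves * seconds_per_item


# 20 accounts x 5 regions at 30 seconds each
print(sequential_seconds(100, 30) / 60)   # minutes, sequential
print(parallel_seconds(100, 30, 15))      # seconds, 15 workers at a time
```

Even with a conservative concurrency of 15, the same 100 items finish in a few minutes instead of close to an hour.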

There are other failure modes with sequential scripts too. A single throttled API call or a flaky network connection causes the entire run to stall or abort. You lose all progress and have to restart from scratch. Step Functions handles retries per-item without affecting the rest of the batch, and gives you a full execution history in the console to debug failures.

Cross-account roles

To execute actions in other accounts, we deploy cross-account roles in all accounts via a CloudFormation StackSet. These cross-account roles are assumed by the operation Lambdas.

CloudFormation template for cross-account roles deployed organization-wide via StackSet

    AWSTemplateFormatVersion: 2010-09-09
    Description: Cross account roles for operations
    Parameters:
      OperationAccountId:
        Type: String
        Description: Account ID of the operation account whose Lambda roles may assume these roles
    Resources:
      OperationAdmin:
        Type: 'AWS::IAM::Role'
        Properties:
          RoleName: operation-admin
          AssumeRolePolicyDocument:
            Statement:
              - Action: 'sts:AssumeRole'
                Effect: Allow
                Principal:
                  AWS: !Sub 'arn:aws:iam::${OperationAccountId}:role/operation-admin-lambda'
            Version: 2012-10-17
          Description: Ops admin role assumed by Lambda functions from Operations Accounts
          ManagedPolicyArns:
            - 'arn:aws:iam::aws:policy/AdministratorAccess'
      OperationReadonly:
        Type: 'AWS::IAM::Role'
        Properties:
          RoleName: operation-readonly
          Path: '/'
          Description: Ops readonly role assumed by Lambda functions from Operations Accounts
          AssumeRolePolicyDocument:
            Statement:
              - Action: 'sts:AssumeRole'
                Effect: Allow
                Principal:
                  AWS: !Sub 'arn:aws:iam::${OperationAccountId}:role/operation-readonly-lambda'
          ManagedPolicyArns:
            - 'arn:aws:iam::aws:policy/ReadOnlyAccess'

CloudFormation template for lambda roles deployed only in the operation account

    AWSTemplateFormatVersion: 2010-09-09
    Description: Cross account lambda roles for operations
    Resources:
      OperationAdminLambda:
        Type: AWS::IAM::Role
        Properties:
          RoleName: operation-admin-lambda
          Path: "/"
          Description: "Allows lambda to assume role operation-admin in target accounts"
          AssumeRolePolicyDocument:
            Statement:
              - Action: sts:AssumeRole
                Effect: Allow
                Principal:
                  Service: lambda.amazonaws.com
          Policies:
            - PolicyName: "assume-role"
              PolicyDocument:
                Statement:
                  - Action:
                      - sts:AssumeRole
                    Effect: Allow
                    Resource:
                      - arn:aws:iam::*:role/operation-admin
          ManagedPolicyArns:
            - arn:aws:iam::aws:policy/service-role/AWSLambdaBasicExecutionRole
      OperationReadonlyLambda:
        Type: AWS::IAM::Role
        Properties:
          RoleName: operation-readonly-lambda
          Path: "/"
          Description: "Allows lambda to assume role operation-readonly in target accounts"
          AssumeRolePolicyDocument:
            Statement:
              - Action: sts:AssumeRole
                Effect: Allow
                Principal:
                  Service: lambda.amazonaws.com
          Policies:
            - PolicyName: "assume-role"
              PolicyDocument:
                Statement:
                  - Action:
                      - sts:AssumeRole
                    Effect: Allow
                    Resource:
                      - arn:aws:iam::*:role/operation-readonly
          ManagedPolicyArns:
            - arn:aws:iam::aws:policy/service-role/AWSLambdaBasicExecutionRole

State machine

The state machine is split into three parts.

1. The Lambda fanout

Its aim is to fetch the account list from the organization management account and build an array with one object for each account/region combination where we want to execute our operation script.

Output example:

    {
      "AccountRegions": [
        {
          "AccountId": "2345678765434545343432",
          "AccountName": "project-1-prod",
          "Region": "eu-west-1"
        },
        {
          "AccountId": "2345678765434545343432",
          "AccountName": "project-1-prod",
          "Region": "us-east-1"
        },
        {
          "AccountId": "987654345567575364564",
          "AccountName": "project-2-dev",
          "Region": "eu-west-1"
        },
        {
          "AccountId": "987654345567575364564",
          "AccountName": "project-2-dev",
          "Region": "us-east-1"
        }
      ]
    }

Each object can also contain other properties required by the worker Lambda.

2. The workers map

The Map state iterates through the array and runs a Lambda worker for each item, with several iterations in flight at once. As of this writing, an inline Map state runs at most 40 iterations concurrently. The iterator must contain at least one task (here, a Lambda), and you can add more tasks if you need to split your process further.

3. The result handler

As a last step, we can export the results of the map as a CSV file to S3, send an email, post a Slack notification, and so on.

State machine definition (ASL)

Here is the full Amazon States Language definition for the three-state machine. Copy this into your Step Functions console or deploy it via CloudFormation/CDK.

    {
      "Comment": "AWS Organizations operator framework",
      "StartAt": "Fanout",
      "States": {
        "Fanout": {
          "Type": "Task",
          "Resource": "arn:aws:lambda:eu-west-1:ACCOUNT_ID:function:org-operator-fanout",
          "ResultPath": "$",
          "Retry": [
            {
              "ErrorEquals": ["Lambda.ServiceException", "Lambda.AWSLambdaException", "Lambda.SdkClientException"],
              "IntervalSeconds": 2,
              "MaxAttempts": 3,
              "BackoffRate": 2
            }
          ],
          "Catch": [
            {
              "ErrorEquals": ["States.ALL"],
              "Next": "FanoutFailed",
              "ResultPath": "$.error"
            }
          ],
          "Next": "WorkersMap"
        },
        "WorkersMap": {
          "Type": "Map",
          "ItemsPath": "$.AccountRegions",
          "MaxConcurrency": 15,
          "Parameters": {
            "AccountId.$": "$$.Map.Item.Value.AccountId",
            "AccountName.$": "$$.Map.Item.Value.AccountName",
            "Region.$": "$$.Map.Item.Value.Region"
          },
          "Iterator": {
            "StartAt": "Worker",
            "States": {
              "Worker": {
                "Type": "Task",
                "Resource": "arn:aws:lambda:eu-west-1:ACCOUNT_ID:function:org-operator-worker",
                "Retry": [
                  {
                    "ErrorEquals": ["Lambda.ServiceException", "Lambda.AWSLambdaException", "States.TaskFailed"],
                    "IntervalSeconds": 5,
                    "MaxAttempts": 2,
                    "BackoffRate": 1.5
                  }
                ],
                "Catch": [
                  {
                    "ErrorEquals": ["States.ALL"],
                    "Next": "WorkerFailed",
                    "ResultPath": "$.error"
                  }
                ],
                "End": true
              },
              "WorkerFailed": {
                "Type": "Pass",
                "Result": "Worker failed - skipped",
                "End": true
              }
            }
          },
          "ResultPath": "$.Results",
          "Next": "ResultHandler"
        },
        "ResultHandler": {
          "Type": "Task",
          "Resource": "arn:aws:lambda:eu-west-1:ACCOUNT_ID:function:org-operator-result-handler",
          "End": true
        },
        "FanoutFailed": {
          "Type": "Fail",
          "Error": "FanoutError",
          "Cause": "Fanout Lambda failed after retries"
        }
      }
    }

Key points in this definition: the WorkerFailed Pass state absorbs individual account failures rather than failing the entire execution. The fanout failure is a hard stop — if you can't build the account list, there's nothing to parallelize.

The fanout Lambda

The fanout is the piece that everyone asks about. It calls organizations:ListAccounts (or organizations:ListAccountsForParent if you want to scope to a specific OU), then cross-products the results with your target region list.

    import boto3
    import os


    def handler(event, context):
        org_client = boto3.client("organizations")
        target_regions = os.environ.get("TARGET_REGIONS", "eu-west-1,us-east-1").split(",")

        accounts = []
        paginator = org_client.get_paginator("list_accounts")
        for page in paginator.paginate():
            for account in page["Accounts"]:
                # Skip suspended or closed accounts
                if account["Status"] != "ACTIVE":
                    continue
                accounts.append({
                    "AccountId": account["Id"],
                    "AccountName": account["Name"],
                })

        account_regions = []
        for account in accounts:
            for region in target_regions:
                account_regions.append({
                    "AccountId": account["AccountId"],
                    "AccountName": account["AccountName"],
                    "Region": region.strip(),
                })

        return {"AccountRegions": account_regions}

A few things worth noting here. First, always filter out non-ACTIVE accounts — suspended accounts will cause every sts:AssumeRole call to fail with AccessDenied, and you don't want those errors polluting your results. Second, keep TARGET_REGIONS as an environment variable so you can change scope without redeploying code. Third, if you want to scope the run to a specific OU rather than the whole org, replace list_accounts with list_accounts_for_parent and pass the ParentId from the event.

The Lambda executing this code needs organizations:ListAccounts (or organizations:ListAccountsForParent) on the management account or a delegated admin account. The standard approach is to deploy the fanout Lambda in a dedicated operations account that has been designated as a delegated administrator for AWS Organizations.
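For the OU-scoped variant mentioned above, here is a minimal sketch. It assumes the OU identifier arrives as ParentId, and the function names are hypothetical. The Organizations client is passed in as an argument (in the Lambda you would pass boto3.client("organizations")) so the logic can be exercised without AWS access. Note that list_accounts_for_parent only returns accounts directly under the given parent, not those in nested OUs.

```python
from typing import Any, Dict, Iterable, List


def list_active_accounts_for_parent(org_client: Any, parent_id: str) -> List[Dict[str, str]]:
    """List ACTIVE accounts that sit directly under one OU (or the root)."""
    accounts = []
    paginator = org_client.get_paginator("list_accounts_for_parent")
    for page in paginator.paginate(ParentId=parent_id):
        for account in page["Accounts"]:
            if account["Status"] == "ACTIVE":
                accounts.append({"AccountId": account["Id"], "AccountName": account["Name"]})
    return accounts


def build_account_regions(accounts: Iterable[Dict[str, str]], regions: Iterable[str]) -> List[Dict[str, str]]:
    """Cross-product of accounts and regions, skipping empty region entries."""
    return [
        {**account, "Region": region.strip()}
        for account in accounts
        for region in regions
        if region.strip()
    ]
```

The handler would then return {"AccountRegions": build_account_regions(accounts, target_regions)}, exactly as in the organization-wide version.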

Error handling and retries

There are three failure categories to plan for in this framework.

STS assume role failures. These happen when an account isn't fully provisioned yet (the StackSet hasn't finished deploying the cross-account role), when an SCP is blocking sts:AssumeRole in the target account, or when the account has been suspended. The helper below catches botocore.exceptions.ClientError (typically with error code AccessDenied) and re-raises it as a RuntimeError that names the account. The worker handler should catch that error and return a structured error payload rather than letting the exception escape. This lets the Map state collect partial results instead of failing the whole iteration.

    import boto3
    from botocore.exceptions import ClientError


    def assume_role(account_id: str, role_name: str, region: str) -> boto3.Session:
        """Return a boto3 session using temporary credentials for the target account."""
        sts = boto3.client("sts")
        try:
            creds = sts.assume_role(
                RoleArn=f"arn:aws:iam::{account_id}:role/{role_name}",
                RoleSessionName="org-operator",
            )["Credentials"]
        except ClientError as e:
            # Surface the AWS error code (for example AccessDenied) to the caller
            code = e.response["Error"]["Code"]
            raise RuntimeError(f"AssumeRole failed for {account_id}: {code}") from e
        return boto3.Session(
            aws_access_key_id=creds["AccessKeyId"],
            aws_secret_access_key=creds["SecretAccessKey"],
            aws_session_token=creds["SessionToken"],
            region_name=region,
        )

Lambda transient failures. The Step Functions retry configuration on the Worker state handles Lambda.ServiceException and Lambda.AWSLambdaException, which cover Lambda service-side errors. The definition above also lists States.TaskFailed, so an exception raised by the worker code itself is retried too. Two attempts with a 1.5x backoff are generally enough: if a worker is still failing after that, the account has a real problem.

Step Functions payload limit. See the payload section below. This is the most common operational issue in production and deserves its own handling strategy.

The Catch block on the Worker state routes failures to a WorkerFailed Pass state that returns a static string. This means the Map always succeeds at the state machine level, and your result handler receives the full array including the failure markers. Log the failures to CloudWatch or write them to DynamoDB so you can triage them separately.
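One way to return a structured error payload from the worker, instead of raising, is sketched below. The run_worker name, the Status values and the payload keys are assumptions made for illustration. The assume argument stands for the assume_role helper shown earlier and operation for whatever inventory or remediation function you run with the session; both are injected so the flow can be tested without AWS access.

```python
from typing import Any, Callable, Dict


def run_worker(
    event: Dict[str, str],
    assume: Callable[[str, str, str], Any],
    operation: Callable[[Any], Any],
    role_name: str = "operation-readonly",
) -> Dict[str, Any]:
    """Process one account/region item and always return a payload."""
    base = {"AccountId": event["AccountId"], "Region": event["Region"]}
    try:
        session = assume(event["AccountId"], role_name, event["Region"])
    except RuntimeError as err:
        # Report the failure as data so the Map state keeps the other results
        return {**base, "Status": "ASSUME_ROLE_FAILED", "Error": str(err)}
    return {**base, "Status": "OK", "Result": operation(session)}
```

Because a failed item still carries its AccountId and Region, the result handler can list exactly which accounts need attention. Errors raised by the operation itself are left to the Retry and Catch blocks of the state machine.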

Concurrency tuning

The MaxConcurrency field in the Map state controls how many Lambda workers run simultaneously. The ASL definition above sets it to 15. The default is 0, which places no limit of its own on parallelism: Step Functions runs as many iterations concurrently as it can, within the Map state's own ceiling and the Lambda concurrency limit in your account.

In practice, MaxConcurrency: 0 is fine for small orgs (under 20 accounts) but becomes dangerous at scale. Here's why:

- Lambda has a regional concurrency limit of 1,000 by default (soft limit, can be raised). If your fanout produces 200 items and each worker invokes several Lambdas internally, you can exhaust the concurrency pool and starve other workloads.

- AWS API rate limits are enforced per account and region. With unlimited concurrency, all workers hitting ec2:DescribeInstances in us-east-1 simultaneously will generate throttling errors that cascade into retries and slow the overall run.

- STS also applies a request-rate quota to AssumeRole (check the current value for your account in Service Quotas). 200 concurrent workers all calling STS at start create a spike that can run into it.

A safe default for most organizations is MaxConcurrency: 10 to 20. To calculate the right value for your setup:

- Take your per-worker Lambda duration (say 30 seconds).

- Divide your acceptable total wall-clock time by that duration. This is the number of sequential waves you can afford.

- Divide the number of items by that number of waves. That gives you the minimum concurrency needed. Add 20–30% headroom for cold starts.

For 100 account/region combinations with 30-second workers and a 3-minute target: 100 / (180 / 30) ≈ 17. With headroom, that lands at about 20.
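The same calculation as a small helper, if you want to plug in your own numbers. The function name and the default headroom of 20% are assumptions for illustration, and the helper rounds up at each step, so it lands slightly above the hand-rounded figure.

```python
import math


def min_concurrency(items: int, worker_seconds: float, target_seconds: float,
                    headroom: float = 0.2) -> int:
    """Smallest MaxConcurrency that fits the target wall-clock time, plus headroom."""
    # Number of sequential waves that fit in the target time (at least one)
    waves = max(1, math.floor(target_seconds / worker_seconds))
    base = math.ceil(items / waves)
    return math.ceil(base * (1 + headroom))


print(min_concurrency(100, 30, 180, headroom=0.0))  # without headroom
print(min_concurrency(100, 30, 180))                # with 20% headroom
```

Treat the result as a starting point and adjust it after watching throttling metrics on a few real runs.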

If you have a large org (100+ accounts) and need faster runs, the better lever is reducing per-worker duration by optimizing API calls and using async SDK clients — not pushing MaxConcurrency to its limit.

How to handle the payload limit

As your organization or the amount of resources grows, you will reach the 256 KB size limit on task outputs. It often occurs after the Map step because it joins the output of all the Lambda workers. My guess is that this limit is related to the Lambda asynchronous invocation payload limit.
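To see how close a payload is to that limit before it fails in production, you can measure its serialized size. A minimal sketch follows; the helper names and the 90% safety margin are assumptions, and the size is measured on the UTF-8 encoded JSON.

```python
import json

# 256 KB limit on state input/output, in bytes
STEP_FUNCTIONS_PAYLOAD_LIMIT = 256 * 1024


def payload_size_bytes(payload) -> int:
    """Size of the payload once serialized as compact UTF-8 JSON."""
    text = json.dumps(payload, separators=(",", ":"), ensure_ascii=False)
    return len(text.encode("utf-8"))


def fits_payload_limit(payload, margin: float = 0.9) -> bool:
    """True when the payload stays under the limit with some safety margin."""
    return payload_size_bytes(payload) <= STEP_FUNCTIONS_PAYLOAD_LIMIT * margin
```

Logging payload_size_bytes for the fanout output and for each worker result gives an early warning well before the limit is hit.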

Fanout output

Let's say you have common parameters for the Lambda workers, for example a list of resource ids such as Security Hub standard ARNs. Repeating these ARNs in every AccountRegions item would make the payload too big. To include them only once, we leverage the Parameters property of the Map state to build the input payload for each concurrent worker.

Fanout output:

    {
      "StandardArns": [
        "arn:aws:securityhub:REGION::standards/aws-foundational-security-best-practices/v/1.0.0",
        "arn:aws:securityhub:REGION::standards/cis-aws-foundations-benchmark/v/1.4.0"
      ],
      "AccountRegions": [
        {
          "AccountId": "2345678765434545343432",
          "AccountName": "project-1-prod",
          "Region": "eu-west-1"
        },
        {
          "AccountId": "987654345567575364564",
          "AccountName": "project-2-dev",
          "Region": "eu-west-1"
        }
      ]
    }

Map definition:

    Type: Map
    ItemsPath: $.AccountRegions
    Parameters:
      AccountId.$: $$.Map.Item.Value.AccountId
      AccountName.$: $$.Map.Item.Value.AccountName
      Region.$: $$.Map.Item.Value.Region
      StandardArns.$: $.StandardArns
    Iterator:
      StartAt: inventory
      States:
        inventory:
          End: true
          Resource: arn:aws:lambda:eu-west-1:23456765432332435:function:securityhubInventory-47IgsXHr66xW
          Type: Task
    MaxConcurrency: 0
    Next: export

Input worker example:

    {
      "AccountId": "2345678765434545343432",
      "AccountName": "project-1-prod",
      "Region": "eu-west-1",
      "StandardArns": [
        "arn:aws:securityhub:REGION::standards/aws-foundational-security-best-practices/v/1.0.0",
        "arn:aws:securityhub:REGION::standards/cis-aws-foundations-benchmark/v/1.4.0"
      ]
    }

Workers map output

One solution is to push the data to DynamoDB or S3 and read it back in the last step.

You can either push your data to DynamoDB from your Lambda or via the "arn:aws:states:::dynamodb:putItem" resource from Step Functions.

Task definition:

    Type: Task
    Resource: arn:aws:states:::dynamodb:putItem
    Parameters:
      Item:
        accountId:
          S.$: $.accountId
        region:
          S.$: $.region
        standards:
          S.$: $.standards
        status:
          S.$: $.status
      TableName: MyInventoryTable
    OutputPath: $.SdkHttpMetadata.HttpStatusCode
    End: true

Note the OutputPath, which trims the task output down to the HTTP status code to minimize the output payload size.
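For the S3 route, the worker can write its full result to a bucket and hand back only a pointer. The sketch below is one possible shape, not the article's implementation: the offload_result name, the returned keys and the bucket and key values are hypothetical. The put_object argument is expected to behave like the put_object method of a boto3 S3 client, and is injected so the function runs without AWS access.

```python
import json
from typing import Any, Callable, Dict


def offload_result(result: Any, bucket: str, key: str,
                   put_object: Callable[..., Any]) -> Dict[str, Any]:
    """Store the full worker result in S3 and return a small pointer payload."""
    body = json.dumps(result).encode("utf-8")
    put_object(Bucket=bucket, Key=key, Body=body)
    # Only this small dictionary flows back through the Map state
    return {"ResultBucket": bucket, "ResultKey": key, "SizeBytes": len(body)}
```

In the Lambda you would call it with boto3.client("s3").put_object and a key built from the account ID and region, and the result handler would read the objects back from the same prefix.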

Cost breakdown

This pattern is close to free for most organizations. Step Functions pricing for Standard Workflows is $0.025 per 1,000 state transitions. Each execution goes through three states (Fanout → Map → ResultHandler), plus two transitions per item inside the Map (Worker → end). For 100 account/region combinations that's 3 + (100 × 2) = 203 transitions, costing roughly $0.005 per run.

Lambda pricing on top is negligible: 102 invocations (1 fanout + 100 workers + 1 result handler) at 30 seconds each with 512 MB memory come to about 1,530 GB-seconds per run, which stays well within the monthly free tier of 400,000 GB-seconds even with daily runs.

If you schedule this framework to run daily across 100 combinations, the monthly Step Functions cost is under $0.20. The DynamoDB writes for payload offloading add a few cents at most. The dominant cost is not compute — it's engineering time spent writing and maintaining the worker Lambdas.
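The Step Functions arithmetic above is easy to reproduce for your own item count. This sketch uses the Standard Workflows price and the transition counts quoted in this section; the function names are made up for illustration, and the free tier is ignored.

```python
# Standard Workflows price quoted above: $0.025 per 1,000 state transitions
PRICE_PER_TRANSITION = 0.025 / 1000


def transitions_per_run(items: int, top_level_states: int = 3,
                        transitions_per_item: int = 2) -> int:
    """State transitions for one execution of the three-state machine."""
    return top_level_states + items * transitions_per_item


def step_functions_cost(items: int, runs: int = 1) -> float:
    """Estimated Step Functions cost in USD for a number of runs."""
    return transitions_per_run(items) * runs * PRICE_PER_TRANSITION


print(transitions_per_run(100))                      # transitions for one run
print(round(step_functions_cost(100), 6))            # one run, in USD
print(round(step_functions_cost(100, runs=30), 6))   # daily runs for a month
```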

One watch-out: if you use Express Workflows instead of Standard Workflows (for higher throughput), pricing switches to a per-request charge plus a duration-based charge per GB-second, and each execution is capped at five minutes. For an org-wide inventory job that runs once or twice a day, Standard Workflows are cheaper and give you full execution history in the console, which is worth more than the marginal cost difference.

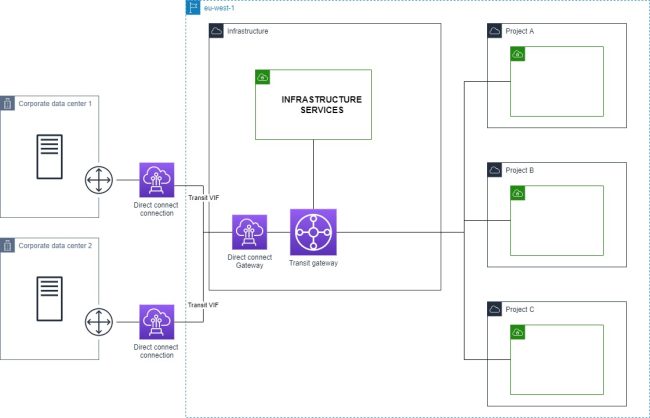

Visualizing the results

Once the framework is generating org-wide inventory data, a raw CSV or DynamoDB table only gets you so far. The data becomes more actionable when you can see the network topology it describes — which accounts have public NAT gateways, where VPC peering connections cross account boundaries, which security groups have overly permissive ingress rules.

VizCon reads from the same accounts using read-only cross-account roles (the same pattern deployed above) and renders the resulting infrastructure as an interactive diagram across all accounts in a single view. If your operator framework surfaces a list of unused NAT gateways or misconfigured security groups, VizCon lets you verify the blast radius visually before running a remediation pass — rather than correlating account IDs across spreadsheet rows.

What's next?

It depends on your needs. Step Functions is a very powerful orchestrator. Here are more example scripts:

- Inventories: unused EBS volumes, unused NAT gateways, Lambda functions, VPCs, accounts, CloudFront distributions, tags, public IPs, public endpoints

- Compliance: tag remediation, remediation of security groups with open ingress

- Security: Security Hub standards and controls management, Inspector activation

Code example available on GitHub.